The Federal Government has revealed it is considering the introduction of age restrictions for internet use in Nigeria as part of efforts to improve online safety for children.

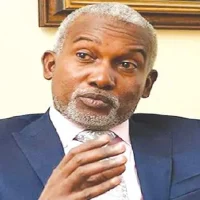

The Minister of Communications, Innovation and Digital Economy, Bosun Tijani, disclosed that the proposal forms part of wider plans to protect minors from the growing risks associated with digital platforms.

According to him, while the internet provides enormous benefits for education, creativity, and communication, it also exposes young users to several dangers.

He explained that children online can face threats such as cyberbullying, harmful or inappropriate content, online exploitation, misuse of personal information, and new risks linked to artificial intelligence tools.

Tijani stated that the ministry is currently assessing different policy approaches to tackle the issue. These options include implementing age-verification systems, strengthening accountability for digital platforms, and expanding regulatory oversight to ensure safer online environments for young users.

He also emphasized that public participation will be vital before any policy is finalized. According to the minister, engaging citizens will help ensure that any framework adopted reflects national priorities, protects children’s rights, and aligns with Nigeria’s evolving digital ecosystem.

In a message shared on X (formerly Twitter), Tijani invited parents, teachers, technology professionals, young people, and other stakeholders to contribute ideas and opinions on the proposed measures.

He said their input would help the government design evidence-based policies aimed at building a safer digital space for Nigerian children.

Earlier developments highlight the government’s increasing focus on regulating online platforms. In August 2025, authorities disclosed that more than 13.5 million social media accounts had been shut down for allegedly violating Nigeria’s online code of practice.

The action affected users on platforms including TikTok, Facebook, Instagram, and X.

Technology companies such as Microsoft, Google, Meta Platforms, TikTok, and X Corp. reportedly enforced the measures in line with the code jointly issued by the Nigerian Communications Commission, the National Information Technology Development Agency, and the National Broadcasting Commission.

A statement from Hadiza Umar revealed that more than 58.9 million pieces of offensive or harmful content were removed from various platforms. The statement also indicated that more than 420,000 posts were taken down and later restored after user appeals, while 13,597,057 accounts were ultimately closed or deactivated.

Authorities noted that the compliance reports provide insight into how digital platforms are addressing user safety concerns in accordance with Nigeria’s code of practice and their respective community guidelines.

TRUETELLS Nigeria reports.